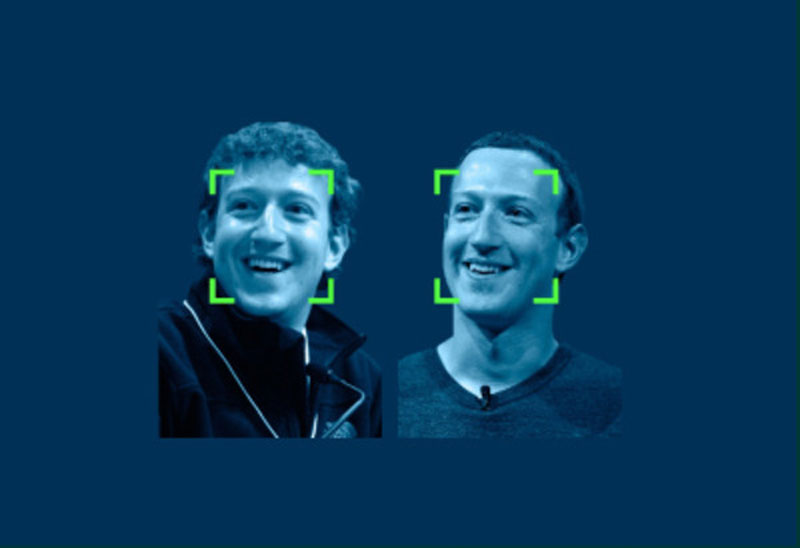

Was the social media meme calling on people to share a picture of themselves from 10 years ago alongside a current photo of themselves a scheme to help train Facebook’s facial recognition algorithms?

Millions of photos were made easily minable through a single unique hashtag that conveniently juxtaposed two images of the same person looking quite different. In the quest for accurate facial recognition, this could be a gold mine, allowing machines to focus on the underlying, relatively timeless features of a face.

We don’t know if Facebook started the meme, but its rapid spread raises questions about whether Facebook may have helped this meme to gain and retain a surprising level of prominence.

See what I’ve done there?

I’ve got you thinking, haven’t I? And I’ve made you suspicious of the meme and of Facebook.

I’m not an expert in facial recognition, and I’ve presented you with hardly any facts. Reading the first three paragraphs, it’s easy to think that I’m claiming Facebook manipulated you. Read them again. I made no such claim; I merely raised the question.

I’ve done this to make a point about how rumours spread and opinions are formed, but it’s surprisingly powerful. Even now, as I’m pointing this out to you, you’re still thinking Facebook may have done it.

Does that really make sense?

Facebook already has many billions of photos of people in every imaginable situation, helpfully tagged with the person’s identity, and for hundreds of millions of people this massive trove already spans well over 10 years. Do you really think the relatively tiny number of photos shared as part of the 10-year challenge would make a difference? It was a drop in the ocean. Then again, why are you listening to me? I’m not a facial recognition expert and, again, my argument lacks meaningful facts.

We’re all gullible and easily primed to believe things.

“Did the shipping company cut corners on safety? How else do you explain the fatalities?” “Are UK Port State inspectors unfairly targeting EU-flagged vessels in the run-up to Brexit?”

Now you’re thinking, and you’re primed. You’re not sure. If you see a social media post or a news article that appears to raise the same question, you’ll be a little more likely to believe that there’s been wrongdoing. Ten more sources raising the same question and you’ll believe the shipping company is negligent and the inspectors are malicious, all without a shred of evidence.

So, in crisis communications, what do we do about this human willingness to believe?

- Anticipate possible loaded questions and indirectly answer them before they’re ever asked.

- Build a reputation over time so people will be primed to believe good things about you and to doubt the negatives.

- Ensure a flow of information early on to discourage speculation and to fill the media landscape with your side of the story, which makes it harder for attacks to take hold.

- Accept that you’ll never be 100% successful and know when to ignore baseless allegations and speculation.

Public opinion is easily swayed, but once it is swayed, the damage is very difficult to undo. I bet you’re still wondering if Facebook might have used the 10-year challenge to train its facial recognition software.